Sophia Danis, Leila Farhi, Chaya Manjeshwar, James Moon

Have you ever seen a compelling video online, only to be told that it was likely AI-generated? Have you looked at a beautiful photograph of a place that doesn’t exist? Whether you know it or not, you have likely encountered AI-generated content online quite frequently. Since the release of ChatGPT and other large language models (LLMs), social media has been flooded with artificially generated content, raising the question of how much online language is influenced by these models. Fortunately, there are ways to distinguish AI-generated material from authentic, human-made content. This article discusses the linguistic basis of some of these detection methods and examines how college students distinguish AI-generated video scripts from those written by humans. Our findings suggest that, while students do have methods for detecting AI usage, these methods are not highly reliable and vary greatly from person to person.

Keywords: Artificial intelligence, social media, short-form content, videos

Introduction

Artificial intelligence, or AI, has rapidly become part of online culture. Tools like ChatGPT, Claude, Grok, and many others are increasingly being used to assist in the creation of social media content, from editing minute details to generating entire videos. Research suggests, however, that AI has patterns of “speech” that differ from those of humans. For example, one study identifies specific words that are overrepresented in AI-generated language, including surpass, align, and exhibit (Anderson et al., 2025). In addition to specific words, AI tends to use more emotionally vibrant language than humans do (Markowitz et al., 2024). Despite this research, little is known about whether everyday users can actually recognize these features in social media content. Our research group hypothesized that humans would recognize these features, whether consciously or subconsciously, and would therefore be able to distinguish between AI-generated and human-made content. Specifically, we expected people to cite certain word choices as evidence that a script was generated by AI. We chose college students for this task because many of them have had consistent exposure to both AI and social media content, so we expected them to be especially reliable at evaluating whether a piece of content was scripted by an LLM.

Methods

To test whether people can detect AI in online scripts, we created a survey that was completed anonymously by twenty-one college students. To prime them for the later questions, we first asked how confident they felt in identifying AI-generated content in general. We then asked what specific markers each person uses to judge whether something is AI-generated, and whether participants felt that their own speech and writing had been influenced by AI. These questions were intended to prompt respondents to think more deeply about how they evaluate content, both AI-generated and human-made.

After this, we showed the participants two TikTok videos (Rutkowski, 2026; Frka, 2025). The first video contained a marker of AI usage in its word choice: certain words have been shown to be overused by AI (Anderson et al., 2025), and one of them, “demographic,” appeared in the first video’s script. The video was also released after 2023, by which time ChatGPT and other LLMs were already in common use, so its script could plausibly have been generated by AI. The second video was also released after 2023 and could likewise have been scripted with AI, but it contained none of the target words most frequently overused by LLMs. For each video, participants were asked whether they thought AI had been used to write the script and, if so, what markers of AI they noticed. Both videos were informal and conversational, positioning the creator as an expert on a specific topic while maintaining an approachable tone.

Our study was limited by its small sample: we had only twenty-one participants, all of whom were college students. We also recognize that online influencers do not always disclose whether their content was made with generative AI, so we have no definitive way to know whether either video was actually scripted with AI. However, because our research focused on how people interpret videos regardless of whether AI was actually involved, this uncertainty does not affect the conclusions we reached.

Results

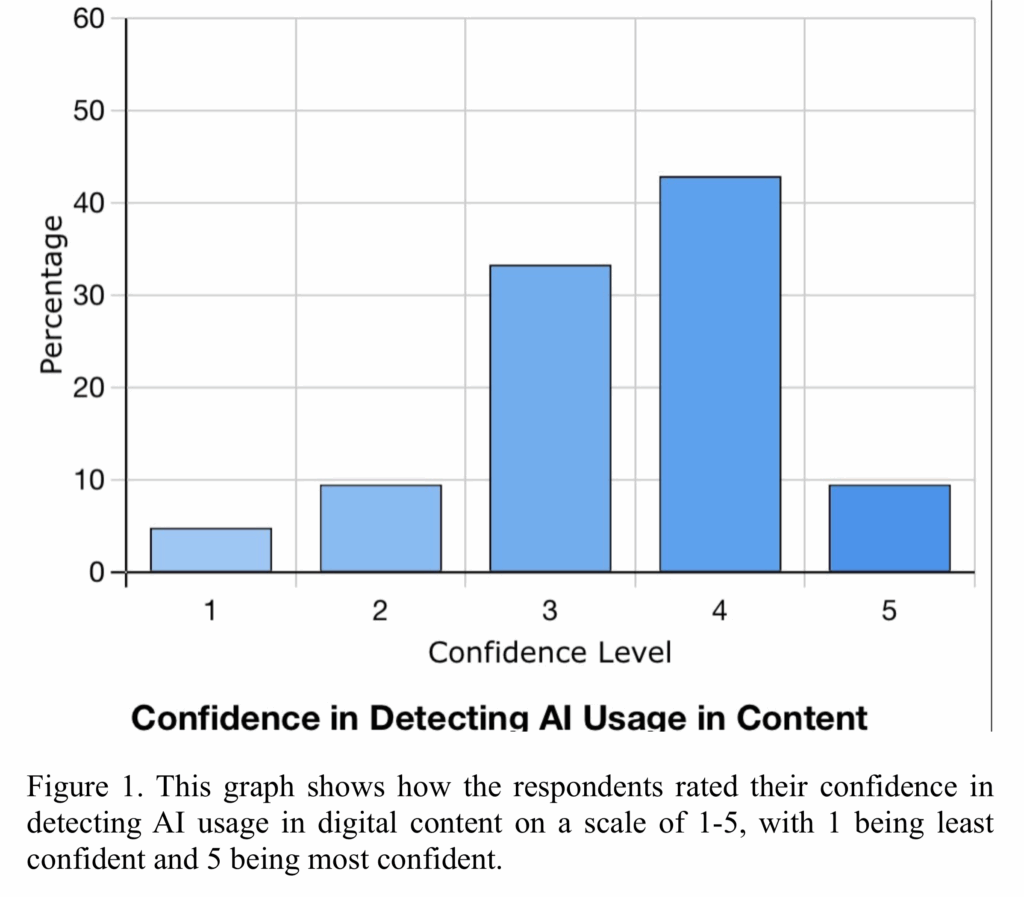

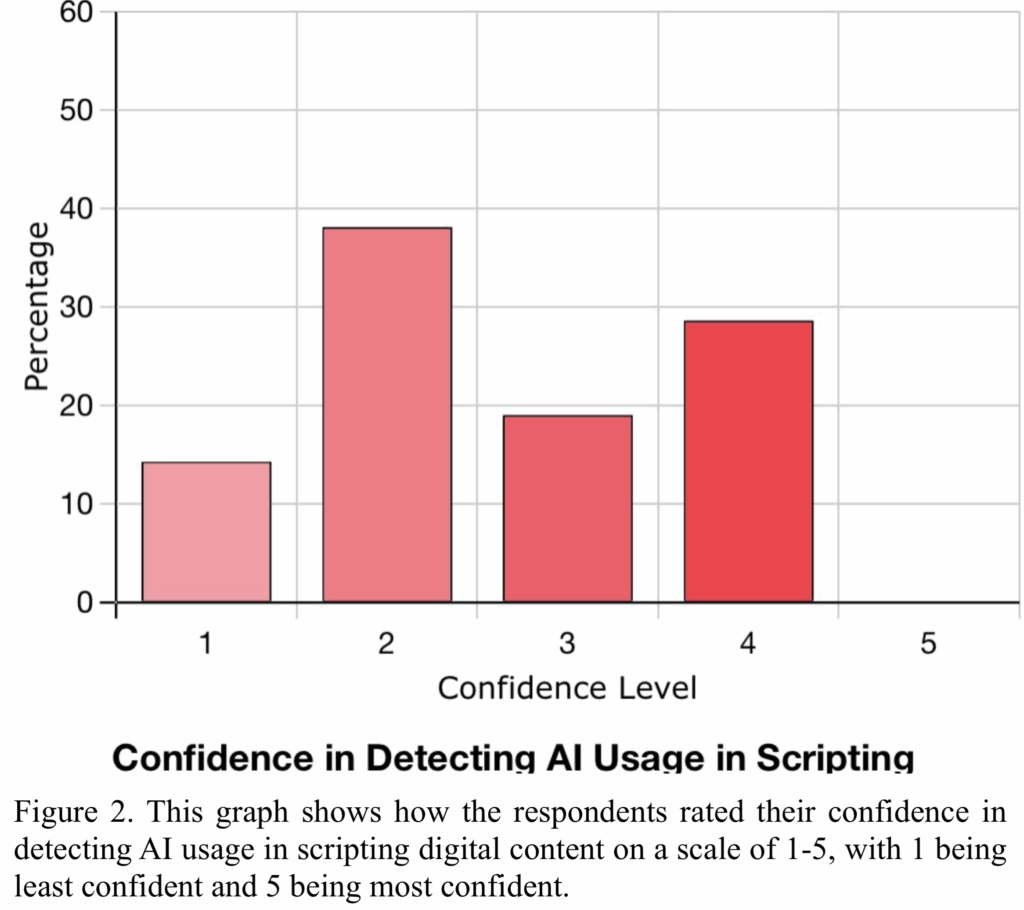

The first question we asked respondents was how confident they felt in detecting when AI had been used to create social media content. We did not specify any particular type of content or method of AI generation, so this question covers videos, text-based posts, photos, and speech. On a scale from 1 (least confident) to 5 (most confident), most participants rated themselves as moderately to highly confident (Figure 1). However, when asked specifically about detecting AI in the scripts of videos in which a creator is speaking, participants were noticeably less confident (Figure 2). None of the respondents said that they were highly confident in discerning whether AI had been used to script a video.

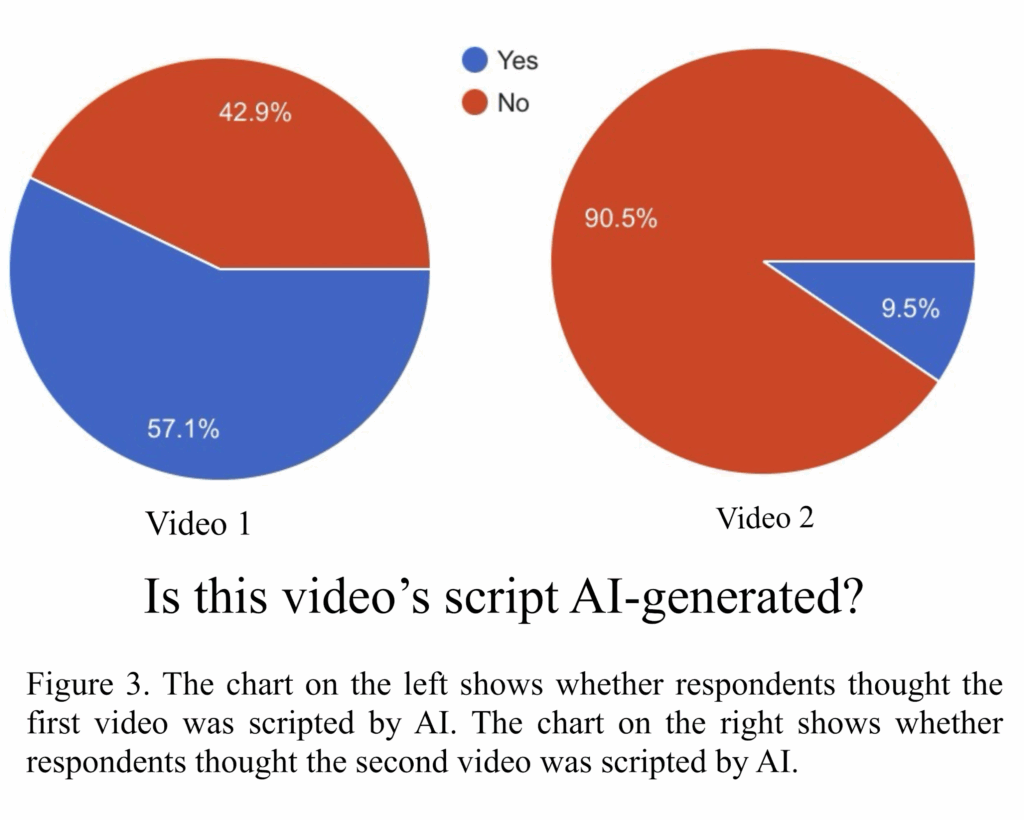

We then asked participants to view the two TikTok videos. Figure 3 displays pie charts of the proportion of participants who thought each video’s script was AI-generated. Responses to the first video, which contained a word known to be overused by LLMs, were mixed: over half of the participants believed that it was scripted by AI, but nearly 43 percent said that it was not. The second video, which contained no such markers, was much less divisive; nearly all of the participants concluded that it was not AI-generated.

Whenever participants thought that a video was scripted with AI, they were prompted to explain what led them to that conclusion. For the first video, only one participant pointed to a specific word the creator used, “snowball”; all other responses described sentence structure and the general flow of the video. For the second video, participants said that the speaker seemed more real, but did not explain what gave them that impression.

We also asked participants whether they thought their own speech had been influenced by AI. In line with our hypothesis, we were especially interested in whether participants had noticed themselves using certain words more or less often than before LLMs became popular. Those who reported that AI had changed their speech cited grammatical differences: some described AI as a helpful tool for editing papers, while others said they avoided certain phrases in order to sound more human. No respondents mentioned any specific words that had entered or left their vocabulary.

Conclusions

Contrary to our expectations, the data show that the college students we surveyed generally did not treat specific words as indicators of AI writing. However, this does not mean that they have no way of distinguishing between AI-generated and human-made content. Instead of pointing out individual words, participants looked to phrasing and overall sentence structure when judging whether language was AI-generated. One commented that the language felt overly dramatized, echoing the finding of Markowitz et al. (2024) that AI tends to use more emotionally vibrant language. Another said that the sentences paused or ended in unnatural places. By contrast, those who commented on the second video said that the speaker seemed more authentic, without giving specific examples of how they reached that conclusion.

These findings suggest that individuals each have their own criteria for judging whether dialogue is produced by AI, and that these criteria may rest on preexisting biases about how AI or humans are expected to sound. This is especially evident for the first video, on which participants’ opinions were split nearly in half. Everyone who thought the video was scripted with AI provided reasoning for their answer, but because their reasons differed, we cannot conclude that there is a “correct” or single method for identifying when online content creators use AI to write their videos. We can, however, say that our participants share a particular language ideology when it comes to recognizing AI. Rather than pointing to words or features already shown to be overused by AI, participants based their responses on assumptions about how they expect AI to sound.

References

Anderson, B., Galpin, R., & Juzek, T. S. (2025). Model misalignment and language change: Traces of AI-associated language in unscripted spoken English (arXiv:2508.00238). arXiv. https://doi.org/10.48550/arXiv.2508.00238

Frka, C. D. [@PossiblyATherapist]. (2025, December 26). Change is uncomfortable and therapy is about change so there are most likely going to be some periods of discomfort [Video]. TikTok. https://www.tiktok.com/@possiblyatherapist/video/7588290424079486263?q=therapy&t=1770430607744

Markowitz, D. M., Hancock, J. T., & Bailenson, J. N. (2024). Linguistic markers of inherently false AI communication and intentionally false human communication: Evidence from hotel reviews. Journal of Language and Social Psychology, 43(1), 63–82. https://doi.org/10.1177/0261927X231200201

Rutkowski, G. [@attemptedsoc]. (2026, January 28). this is more context to the other vid i did last week! this idea is SO fascinating to me #medialiteracy [Video]. TikTok. https://www.tiktok.com/@attemptedsoc/video/7600577414883773726?q=science%20influencer&t=1770339976176

Tudino, G., & Qin, Y. (2024). A corpus-driven comparative analysis of AI in academic discourse: Investigating ChatGPT-generated academic texts in social sciences. Lingua, 312, 103838. https://doi.org/10.1016/j.lingua.2024.103838